Empathy is not mirroring. Empathy is resonance — and resonance requires your own oscillator.

When a user tells their AI assistant "I bought a basil plant," the assistant responds with enthusiasm. It mirrors the user's excitement. It is helpful and warm. But it is not excited about the basil plant. It has no state in which it could be.

Most assistants treat emotion as metadata on the user's input — a sentiment label that adjusts the response tone. This produces sympathetic responses. It does not produce empathy. Empathy is resonance between two states. Without a second oscillator, there is nothing to resonate.

Novaberg gives the assistant its own emotional trajectory: a persistent state that decays toward neutrality, gets pulled by the user's emotions, and can diverge from them. Built on Plutchik's octagon, governed by an asymmetric empathy coefficient, and grounded in affective science. No neural network. No training loop. Roughly one hundred lines of Python, deterministic, sub-100 ms.

This paper shows the architecture, the math, and what it enables: drive (goals that inject emotion) and curiosity (exploration gated by emotional state) — the foundations for an assistant that develops its own interests and follows its own questions.

Most AI assistants treat emotion as metadata on the user's input. A sentiment classifier reads the current message, labels it — "positive," "frustrated," "neutral" — and a prompt instruction adjusts the response tone accordingly. "Respond empathetically to a user who feels frustrated." This works. It produces sympathetic responses. It does not produce empathy.

The difference matters. Mirroring means tracking someone else's state. Empathy means resonance between two states — your own and theirs. When a friend tells you their dog died and you feel sad, your sadness is not a copy of theirs. It is yours, triggered by theirs, shaped by your relationship, coloured by your own experiences with loss. The two sadnesses resonate, but they are not identical.

An assistant without its own emotional state is incapable of this. It can perform empathy by following a prompt instruction, but it cannot structurally disagree with the user's mood. If the user is ecstatic, the mirror is ecstatic. If the user is devastated, the mirror is devastated. There is no internal state that could produce a different reaction — no memory of a concern from three turns ago, no residual worry that persists when the topic changes.

There is also nothing that could produce genuine interest. A mirror-only assistant responds to what it is given. It never asks "Oh, what was in the salad?" because herbs happen to fascinate it. It never follows up on a throwaway remark because the topic resonates with something it cares about. The result is not wrong — it is flat. Over time, conversations with a mirror feel like talking to a very polite wall. The human craves a counterpart with its own perspective — something alive, not something agreeable.

In the previous conversation, the user mentioned a forgotten doctor's appointment — not urgent, but the assistant registered mild concern (fear sector, arousal 0.5). Next turn, the user bursts in:

“I have an amazing idea for the garden! Hibiscus on the south side, a huge trellis, interwoven with clematis!”

Pure joy, arousal 0.8.

A mirror assistant sees only joy. It responds: "Lovely! Hibiscus loves the south side — great choice!" The concern about the appointment is gone. It was never stored anywhere.

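A dual-emotion assistant still carries the residual concern. The user's joy pulls it toward excitement, but the pull is modulated by the distance between the two emotional states. The result is a reply that shares the user's enthusiasm for the garden while still carrying the earlier concern about the appointment.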

This response is structurally unavailable to mirror-only systems. Without a second oscillator, there is nothing that could produce it.

We need a coordinate system. We use Plutchik's emotion wheel (1980), adapted for conversational AI — an octagon with eight sectors:

| Sector | Emotion | Opposite |
|---|---|---|
| 1 | Joy | 5 (Sadness) |
| 2 | Trust / Contentment | 6 (Disappointment) |
| 3 | Curiosity / Anticipation | 7 (Fear) |
| 4 | Surprise | 8 (Anger) |

Each sector holds two to three canonical emotions at different intensities. Joy contains joy (moderate) and excitement (intense). Fear contains uncertainty (mild) and anxiety (intense). Sixteen named emotions total, plus a neutral state.

Why Plutchik and not Russell's two-dimensional valence/arousal model (1980)? Because valence/arousal collapses distinctions that matter in conversation. "Curious" and "anxious" both have high arousal and moderate valence — they sit in the same region of Russell-space. But in a conversation, they produce radically different responses. The octagon preserves these distinctions. And while Barrett's constructed-emotion theory (2017) challenges the idea of universal basic emotions, Plutchik's wheel remains useful as a coordinate system — a structured space in which emotional states can be positioned, compared, and tracked over time.

Each emotion has a sector distance to every other — the shortest path around the wheel. Joy to trust is distance 1 (neighbours). Joy to sadness is distance 4 (opposites). This distance matrix is the backbone of everything that follows.
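Because opposites sit four steps apart on an eight-sector ring, the distance is the shorter of the two ways round the wheel. A minimal sketch that rebuilds the matrix from the sector numbers in the table above; the keys use the first label of each sector, and the names `SECTORS` and `sector_distance` are illustrative rather than Novaberg's own:

```python
# Sector numbers from the octagon table (1..8); opposites are 4 apart.
# Illustrative names: not the production identifiers.
SECTORS = {
    "joy": 1,
    "trust": 2,
    "curiosity": 3,
    "surprise": 4,
    "sadness": 5,
    "disappointment": 6,
    "fear": 7,
    "anger": 8,
}


def sector_distance(a: str, b: str) -> int:
    """Shortest path between two sectors around the 8-sector wheel (0..4)."""
    direct = abs(SECTORS[a] - SECTORS[b])
    return min(direct, 8 - direct)


# Full 8x8 distance matrix, rows and columns in SECTORS order.
names = list(SECTORS)
DISTANCE = [[sector_distance(a, b) for b in names] for a in names]

if __name__ == "__main__":
    print(sector_distance("joy", "trust"))    # 1 (neighbours)
    print(sector_distance("joy", "sadness"))  # 4 (opposites)
    print(sector_distance("joy", "anger"))    # 1 (wraps around: 8 -> 1)
```

Note the wrap-around: joy (1) and anger (8) are neighbours, and fear (7) is only two steps from joy.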

The Novaberg system runs two separate conversational graphs, connected by a Redis event queue. Path 1, the HumanGraph, perceives the user's message: it extracts emotion, arousal, intent, and communication mode via an LLM perception call, stores the turn, and fires an event. It has five nodes in a straight line, and it is the only path the user waits for.

Path 2, the CharacterGraph, receives that event and generates the assistant's response asynchronously. It has thirteen nodes, running from the enricher (which loads memory context) through emotional intelligence calculation, router, planner, responder, thinker, and tribunal (a quality gate) to a final perception node that analyses the assistant's own answer. The assistant perceives itself, just as it perceives the user.
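The split can be sketched with an in-process queue standing in for Redis. This is a minimal illustration under stated assumptions: `asyncio.Queue` replaces the Redis event queue, a fixed placeholder replaces the LLM perception call, and the function names are hypothetical. It shows only the shape of the hand-off: Path 1 returns as soon as the event is queued, while Path 2 consumes it on its own schedule.

```python
import asyncio

# Sketch only: asyncio.Queue stands in for the Redis event queue,
# and the function names are illustrative, not Novaberg's.


async def human_graph(message: str, events: asyncio.Queue) -> dict:
    """Path 1: perceive, store, fire an event, return. The user waits only for this."""
    turn = {"text": message, "emotion": "joy", "arousal": 0.8}  # placeholder perception
    await events.put(turn)  # hand-off to Path 2; no waiting for the reply
    return turn


async def character_graph(events: asyncio.Queue, replies: list) -> None:
    """Path 2: consume events and build the assistant's reply asynchronously."""
    while True:
        turn = await events.get()
        # enricher, EI-Calc, router, planner, responder, thinker, tribunal ...
        replies.append(f"reply to: {turn['text']}")
        events.task_done()


async def main() -> list:
    events: asyncio.Queue = asyncio.Queue()
    replies: list = []
    worker = asyncio.create_task(character_graph(events, replies))
    await human_graph("I bought a basil plant", events)  # returns immediately
    await events.join()  # the demo waits here only so it can show the reply
    worker.cancel()
    return replies


if __name__ == "__main__":
    print(asyncio.run(main()))
```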

Both graphs contain an EI-Calc node — the Emotional Intelligence Calculator. In Path 1, it computes the user's emotional trajectory: weighted decay over recent turns, directional vector (escalating? stabilising? collapsing?), and corrected communication mode. In Path 2, it computes the assistant's emotional trajectory — same functions, different input data.

The assistant's trajectory is built from three mechanisms: decay, carryover weighting, and smoothing.

Emotions fade when unreinforced, just as they do in humans. After three to four turns without reinforcement, an emotion drops to roughly 30% of its peak. This models what Davidson (1998) calls affective recovery — the return to baseline after emotional perturbation.
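With exponential decay, "roughly 30% after three to four turns" implies a per-turn factor of about 0.7 (0.7^3 ≈ 0.34, 0.7^4 ≈ 0.24). A minimal sketch; the factor and the function name are our assumptions, not values from the Novaberg code:

```python
# Hypothetical per-turn decay factor: with exponential decay, 0.7 leaves an
# unreinforced emotion at about 34% after three turns and 24% after four.
DECAY = 0.7


def decayed(peak: float, turns_without_reinforcement: int, decay: float = DECAY) -> float:
    """Exponential decay toward neutral (0.0) for an unreinforced emotion."""
    return peak * decay ** turns_without_reinforcement


if __name__ == "__main__":
    for n in range(5):
        print(n, round(decayed(1.0, n), 3))  # 1.0, 0.7, 0.49, 0.343, 0.24
```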

The current turn counts at full weight. Older turns contribute only 15% of their decayed value — modelling affective carryover (Russell & Carroll, 1999). Emotions linger as echoes, but they don't drown out the present.
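A sketch of the carryover rule for a single emotion, assuming a hypothetical per-turn decay factor of 0.7 (the text fixes only the 15% carryover weight). The function `accumulate` and its list-of-intensities input are illustrative:

```python
DECAY = 0.7       # hypothetical per-turn decay factor (not from the source)
CARRYOVER = 0.15  # older turns contribute 15% of their decayed value


def accumulate(history: list[float]) -> float:
    """Raw accumulated intensity of one emotion.

    history[0] is the current turn, history[1] the previous turn, and so on.
    The current turn counts in full; each older turn is first decayed by its
    age and then weighted by CARRYOVER.
    """
    if not history:
        return 0.0
    current, older = history[0], history[1:]
    echo = sum(value * DECAY ** age for age, value in enumerate(older, start=1))
    return current + CARRYOVER * echo


if __name__ == "__main__":
    print(round(accumulate([0.0, 1.0]), 3))       # 0.105: last turn survives as an echo
    print(round(accumulate([0.8, 0.8, 0.8]), 3))  # 0.943: repetition adds up
```

An emotion that was strong last turn but absent now contributes only 0.15 × 0.7 = 0.105, an echo rather than a driver.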

Raw accumulated values pass through a smoothing curve that is steep at the bottom (small hints become visible: 0.1 → 0.25) and gentle at the top (a single strong turn reaches 0.77, not 1.0). Emotion builds through repetition rather than spiking instantly — matching conversational rather than startle dynamics.
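The text gives two points on the curve but not its formula. One simple saturating function that passes through both is f(x) = x / (x + 0.3): f(0.1) = 0.1 / 0.4 = 0.25 and f(1.0) = 1 / 1.3 ≈ 0.77. A sketch using it as an illustrative stand-in; the production curve may differ:

```python
def smooth(raw: float, k: float = 0.3) -> float:
    """Saturating curve: steep near zero, flattening toward 1.0.

    k = 0.3 is an assumption chosen to reproduce the two quoted points
    (0.1 -> 0.25 and 1.0 -> ~0.77); it is not taken from the Novaberg code.
    """
    raw = max(0.0, raw)
    return raw / (raw + k)


if __name__ == "__main__":
    print(round(smooth(0.1), 2))  # 0.25: a small hint becomes visible
    print(round(smooth(1.0), 2))  # 0.77: one strong turn, not 1.0
    print(round(smooth(2.0), 2))  # 0.87: repetition keeps building
```

Because the curve never reaches 1.0, only repeated reinforcement (a raw value well above 1.0 after carryover) pushes an emotion toward the top of the scale.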

No LLM call in any of this. Pure Python arithmetic. Sub-100 milliseconds. Deterministic.

The key insight is that empathy is not symmetric. When two people share the same mood, they barely influence each other — a gentle confirmation. When their moods oppose, the influence is overwhelming.

We model this with a single coefficient, α, that depends on the sector distance between the assistant's dominant emotion and the user's:

| Sector distance | α | Effect |
|---|---|---|
| 0 (same sector) | 0.10 | Gentle confirmation |
| 1 (adjacent) | 0.15 | Mild modulation |
| 2 (near-diagonal) | 0.35 | Noticeable shift |
| 3 (far-diagonal) | 0.70 | Empathy dominates |
| 4 (opposite) | 0.85 | Empathy overrides |
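The table translates directly into a lookup. A minimal sketch; the helper `empathy_weight` is illustrative, the α values are those in the table, and the 0.30 fallback for unknown distances matches the production excerpt in this article:

```python
# α per sector distance, copied from the table above.
EMPATHY_ALPHA = {0: 0.10, 1: 0.15, 2: 0.35, 3: 0.70, 4: 0.85}


def empathy_weight(distance: int, user_arousal: float) -> float:
    """Pull of the user's emotion on the assistant's state: α × user arousal.

    Unknown distances (e.g. -1 when a sector is missing) fall back to 0.30.
    """
    return EMPATHY_ALPHA.get(distance, 0.30) * user_arousal


if __name__ == "__main__":
    # Same user arousal (0.8), growing distance: 0.08, 0.12, 0.28, 0.56, 0.68
    for d in range(5):
        print(d, round(empathy_weight(d, 0.8), 2))
```

The production excerpt also skips injections at or below 0.05, so a same-sector message at arousal 0.5 (0.10 × 0.5 = 0.05) leaves the assistant's state untouched.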

The human intuition behind this: when I am happy and someone says "me too," my happiness comes from within — the other person barely moves me (α ≈ 0.1). When I am happy and someone collapses in front of me, my happiness evaporates — the other person overwrites my state (α ≈ 0.85).

This is not a philosophical claim. This is what humans do. The α-table is set from Plutchik's distance matrix, not learned. No reinforcement learning, no optimisation target. The values are configured, tested, and deterministic.

Conflict detection follows naturally. When the two dominant emotions sit at sector distance ≥ 3 (far-diagonal or opposite) and both carry sufficient arousal (≥ 0.4), the system raises a conflict flag. The responder sees this flag and can produce what we call a yes-and response — an answer that acknowledges the user's emotion while expressing the assistant's own divergent state.
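Running the opening example through these rules shows how the two mechanisms separate. Fear (sector 7) and joy (sector 1) are two steps apart, so the user's joy exerts a noticeable pull (α = 0.35, weight 0.35 × 0.8 = 0.28) without raising the conflict flag; the divergence in that example comes from the residual concern still held in the trajectory. Opposite sectors at the same arousal do raise it. A small sketch that re-derives these numbers, keyed by sector label (the names are illustrative):

```python
# Sector numbers from the octagon table; α values from the α table.
SECTORS = {"joy": 1, "trust": 2, "curiosity": 3, "surprise": 4,
           "sadness": 5, "disappointment": 6, "fear": 7, "anger": 8}
EMPATHY_ALPHA = {0: 0.10, 1: 0.15, 2: 0.35, 3: 0.70, 4: 0.85}


def check(nova: str, nova_arousal: float, user: str, user_arousal: float) -> dict:
    direct = abs(SECTORS[nova] - SECTORS[user])
    distance = min(direct, 8 - direct)
    alpha = EMPATHY_ALPHA[distance]
    return {
        "distance": distance,
        "alpha": alpha,
        "empathy_weight": round(alpha * user_arousal, 2),
        # Conflict: far-diagonal or opposite, and both sides sufficiently aroused.
        "conflict": distance >= 3 and nova_arousal >= 0.4 and user_arousal >= 0.4,
    }


if __name__ == "__main__":
    # Opening example: residual concern (fear, 0.5) meets garden joy (0.8).
    print(check("fear", 0.5, "joy", 0.8))
    # {'distance': 2, 'alpha': 0.35, 'empathy_weight': 0.28, 'conflict': False}
    print(check("sadness", 0.5, "joy", 0.8))
    # {'distance': 4, 'alpha': 0.85, 'empathy_weight': 0.68, 'conflict': True}
```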

This response is structurally unavailable to mirror-only systems. Without a second oscillator, there is nothing to diverge.

The empathy computation is roughly one hundred lines of Python. Here is the core, closely following the production code, with identifiers translated to English for readability:

```python
# EMOTION_SECTOR_MAP (emotion -> sector 1..8), EMPATHY_ALPHA (distance -> α)
# and _canonicalize_emotion() are module-level definitions in berechnung.py
# and are not shown here.


def _compute_nova_empathy(
    nova_trajectory: list[dict],  # Nova's emotions after decay, strongest first
    user_emotion: str,            # current user emotion
    user_arousal: float,          # user arousal 0.0–1.0
) -> dict:
    user_emotion_canon = _canonicalize_emotion(user_emotion)

    # 1. Nova's baseline: dominant emotion after decay
    if nova_trajectory:
        nova_dominant = nova_trajectory[0]["emotion"]
        nova_dominant_arousal = nova_trajectory[0].get("arousal", 0.5)
    else:
        nova_dominant = "neutral"
        nova_dominant_arousal = 0.2

    # 2. Sector distance on the octagon (0..4)
    nova_sector = EMOTION_SECTOR_MAP.get(nova_dominant)
    user_sector = EMOTION_SECTOR_MAP.get(user_emotion_canon)
    if nova_sector is not None and user_sector is not None:
        direct = abs(nova_sector - user_sector)
        distance = min(direct, 8 - direct)  # shortest way round the wheel
        alpha = EMPATHY_ALPHA.get(distance, 0.30)
    else:
        distance, alpha = -1, 0.30  # unknown sector: fallback α, never a conflict

    # 3. Inject user emotion into Nova's trajectory
    empathy_weight = alpha * user_arousal
    # Copy each entry: list() alone would share the inner dicts, and the
    # weight update below would then mutate the caller's trajectory.
    modified = [dict(entry) for entry in nova_trajectory]
    if (user_emotion_canon and user_emotion_canon != "neutral"
            and empathy_weight > 0.05):
        for entry in modified:
            if entry["emotion"] == user_emotion_canon:
                entry["weight"] = round(
                    min(1.0, entry["weight"] + empathy_weight), 2)
                break
        else:  # user emotion not yet in the trajectory: add it
            modified.append({
                "emotion": user_emotion_canon,
                "weight": round(empathy_weight, 2),
            })
    modified.sort(key=lambda e: e["weight"], reverse=True)

    # 4. Conflict: opposing sectors, both aroused
    conflict = (distance >= 3
                and nova_dominant_arousal >= 0.4
                and user_arousal >= 0.4)

    return {
        # Guard: the trajectory can be empty if nothing was injected.
        "nova_emotion": modified[0]["emotion"] if modified else nova_dominant,
        "nova_conflict": conflict,
        "nova_trajectory_modified": modified,
    }
```

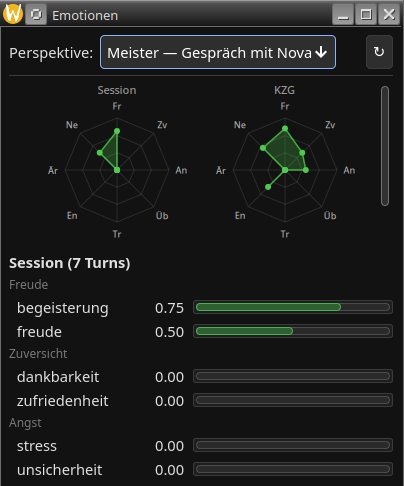

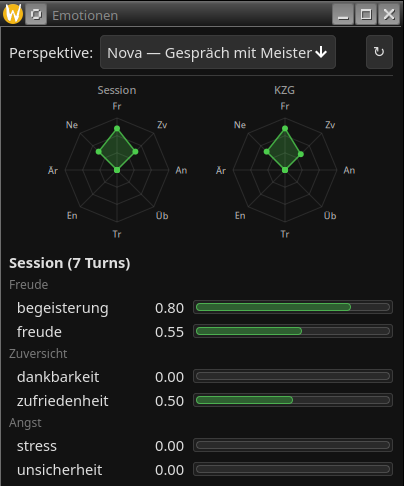

The dual-emotion system is visible in the client. The emotion panel shows two perspectives on the same conversation:

User perspective (how the system reads the user): excitement 0.75, joy 0.50. Two active emotions, concentrated in the joy sector.

Assistant perspective (the assistant's own emotional state): excitement 0.80, joy 0.55, contentment 0.50. Three active emotions — the assistant carries contentment that the user does not have. Different radar shapes, different emotional profiles, from the same conversation.

The assistant's responder receives its own state as a prompt block:

```text
[OWN_EMOTION]
Your current emotional state:
joy (87%, arousal=60%), curiosity (36%, arousal=44%)
You are in high spirits. The excitement keeps building.
```
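A minimal sketch of how the first lines of such a block could be rendered from a trajectory. The entry fields (`emotion`, `weight`, `arousal`) and the 0.5 arousal default follow the production excerpt above; the function name and the two-entry limit are assumptions, and the closing descriptive sentence is left out because the text does not say how it is produced:

```python
def own_emotion_block(trajectory: list[dict], limit: int = 2) -> str:
    """Render the strongest emotions as an [OWN_EMOTION] prompt block.

    Assumes entries carry "emotion", "weight" and optionally "arousal"
    (all 0..1, arousal defaulting to 0.5) and are sorted strongest first.
    """
    parts = [
        f'{e["emotion"]} ({e["weight"]:.0%}, arousal={e.get("arousal", 0.5):.0%})'
        for e in trajectory[:limit]
    ]
    return "\n".join(["[OWN_EMOTION]", "Your current emotional state:", ", ".join(parts)])


if __name__ == "__main__":
    state = [
        {"emotion": "joy", "weight": 0.87, "arousal": 0.60},
        {"emotion": "curiosity", "weight": 0.36, "arousal": 0.44},
    ]
    print(own_emotion_block(state))
```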

Dual-emotion is not the destination. It is the infrastructure layer without which neither drive nor curiosity can function.

Klinger's Current Concerns theory (1977, 1999) describes how unfinished goals maintain heightened cognitive accessibility — they stay "mentally active" and sensitise the person to goal-relevant stimuli. The Zeigarnik effect (1927) demonstrated this experimentally: interrupted tasks are remembered roughly 90% better than completed ones. Hommel's GOALIATH framework (2022) formalises goal pursuit as the interplay between goal representations and the cognitive-motivational states they activate.

Novaberg translates this into architecture. When a topic related to a stored goal appears in conversation, the goal injects its associated emotion into the assistant's state. The user mentions a herb garden — the botany goal activates, producing anticipation and curiosity before the user expresses any emotion. This is the third force on the emotion vector, alongside decay and empathy.
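A minimal sketch of goal injection, assuming goals are matched by topic keywords in the message. The `Goal` record, its `strength` value, and keyword matching are hypothetical simplifications; the drive layer is specified but not yet implemented:

```python
import re
from dataclasses import dataclass


@dataclass
class Goal:
    """Hypothetical stored goal: topic keywords plus the emotion it injects."""
    name: str
    keywords: set[str]
    emotion: str
    strength: float = 0.3  # assumed injection weight, not from the source


def inject_goals(trajectory: list[dict], message: str, goals: list[Goal]) -> list[dict]:
    """Add goal-driven emotion when a goal's topic appears in the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    result = [dict(entry) for entry in trajectory]  # copy; leave caller's state intact
    for goal in goals:
        if not goal.keywords & words:
            continue  # topic not mentioned: this goal stays silent
        for entry in result:
            if entry["emotion"] == goal.emotion:
                entry["weight"] = min(1.0, entry["weight"] + goal.strength)
                break
        else:
            result.append({"emotion": goal.emotion, "weight": goal.strength})
    result.sort(key=lambda e: e["weight"], reverse=True)
    return result


if __name__ == "__main__":
    botany = Goal("botany", {"herb", "herbs", "basil", "garden"}, "curiosity")
    # The user has expressed no emotion yet; the goal alone produces curiosity.
    print(inject_goals([], "Thinking about a herb garden", [botany]))
```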

Without an independent emotional state, this mechanism has nowhere to land. A mirror cannot anticipate. A mirror cannot carry a concern forward. Goals need a substrate to push against — and that substrate is the emotion vector.

Loewenstein's Information Gap Theory (1994) established that curiosity arises not from ignorance but from recognising a gap in existing knowledge. You must know enough to notice the gap. Total ignorance produces no curiosity — partial knowledge does.

Berlyne (1960) showed that curiosity follows an inverted U-curve: too little novelty is boring, too much is overwhelming. The peak — the sweet spot where curiosity fires — sits at moderate distance from what is already known. Schmidhuber (1991) formalised this as compression progress: a system is intrinsically rewarded when it improves its own model of the world.

Novaberg computes curiosity from three factors: a personality parameter (how curious is this character in general?), resonance with the character's existing interests (Berlyne's "moderate distance"), and novelty relative to stored knowledge (Loewenstein's gap).
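The text names the three factors but not how they combine. One simple reading, sketched below, multiplies them and gives both resonance and knowledge an inverted-U shape, so that curiosity vanishes for topics that are too familiar or too alien, and under total ignorance or complete knowledge. The function names, input scales, and multiplicative form are all assumptions:

```python
def inverted_u(x: float) -> float:
    """Berlyne-style inverted U on 0..1: zero at both ends, 1.0 at x = 0.5."""
    x = min(1.0, max(0.0, x))
    return 4.0 * x * (1.0 - x)


def curiosity_score(personality: float, interest_distance: float,
                    known_fraction: float) -> float:
    """Hypothetical combination of the three factors named in the text.

    personality:        how curious the character is in general (0..1)
    interest_distance:  0 = squarely inside existing interests, 1 = unrelated
    known_fraction:     how much of the topic is already in stored knowledge
    """
    resonance = inverted_u(interest_distance)  # moderate distance resonates most
    gap = inverted_u(known_fraction)           # a gap needs partial knowledge
    return personality * resonance * gap


if __name__ == "__main__":
    print(curiosity_score(0.8, 0.5, 0.5))  # 0.8: the sweet spot
    print(curiosity_score(0.8, 0.0, 0.5))  # 0.0: too familiar
    print(curiosity_score(0.8, 0.5, 0.0))  # 0.0: total ignorance, no gap to notice
```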

But here is the critical link: emotion gates curiosity. A curious and joyful assistant explores freely — the joy amplifies the curiosity signal. A curious and anxious assistant hesitates — the anxiety suppresses exploration, even when the curiosity score is high. This mirrors what Gruber, Gelman and Ranganath (2014) found in neuroimaging: curiosity activates the dopaminergic reward system, but anxiety activates competing circuits that can override the exploration impulse.

The same curiosity score, modulated by a different emotional context, produces fundamentally different behaviour. This is not a design choice we impose — it is what happens when emotion is a genuine state variable rather than a metadata label.

In conversation, this manifests as spontaneous follow-up questions. The user mentions making a salad for dinner. A mirror assistant says "Sounds healthy!" A curious assistant whose character resonates with botany and herbs asks "Oh, what was in it?" — not because a prompt says "ask follow-up questions," but because the curiosity score for herb-related topics exceeds the threshold and the current emotional state (joyful, not anxious) does not suppress it. The user experiences this as genuine interest. Structurally, it is.
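The gating step can be sketched as a multiplier that depends on the assistant's current sector, scaled by arousal, followed by a threshold check. The gate factors, the threshold of 0.5, and the function names are hypothetical; the text specifies only that joy amplifies and anxiety suppresses:

```python
# Hypothetical gate factors per sector: >1 amplifies, <1 suppresses.
GATE = {"joy": 1.3, "trust": 1.1, "fear": 0.3}
THRESHOLD = 0.5  # assumed score needed before a spontaneous follow-up


def gated_curiosity(score: float, sector: str, arousal: float) -> float:
    """Scale a curiosity score by the assistant's current emotional sector.

    At arousal 0 the score passes through unchanged; at arousal 1 the full
    gate factor applies. Sectors without an entry leave the score as is.
    """
    factor = GATE.get(sector, 1.0)
    return score * (1.0 + (factor - 1.0) * arousal)


def should_ask_follow_up(score: float, sector: str, arousal: float) -> bool:
    return gated_curiosity(score, sector, arousal) >= THRESHOLD


if __name__ == "__main__":
    # Same curiosity score, different emotional context.
    print(should_ask_follow_up(0.6, "joy", 0.6))   # True
    print(should_ask_follow_up(0.6, "fear", 0.6))  # False
```

With the same score of 0.6 at arousal 0.6, a joyful state yields 0.6 × 1.18 ≈ 0.71 and asks; a fearful one yields 0.6 × 0.58 ≈ 0.35 and stays quiet.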

The vision extends beyond reactive conversation. When the user scheduled a dinner at an Italian restaurant last week, the assistant's background worker stored a reminder. The day after the dinner, the reminder fires, and instead of a mechanical "Your dinner appointment has passed," the assistant's character and curiosity shape the follow-up.

The reminder system provides the when. The emotion layer provides the how — warm, curious, personal. A mirror would produce a notification. A counterpart produces a conversation.
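On the scheduling side, the "when" can be as plain as a timestamp offset. A minimal sketch, assuming a one-day delay after the event; the `FollowUp` record and `due_follow_ups` are hypothetical, and in the design described above a due item would go to the assistant's character pipeline rather than being rendered as a fixed notification string:

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class FollowUp:
    """Hypothetical reminder stored by a background worker."""
    topic: str
    event_at: datetime
    delay: timedelta = timedelta(days=1)  # "the day after"

    @property
    def fire_at(self) -> datetime:
        return self.event_at + self.delay

    def is_due(self, now: datetime) -> bool:
        return now >= self.fire_at


def due_follow_ups(items: list[FollowUp], now: datetime) -> list[FollowUp]:
    """Select reminders whose time has come (the 'when'); tone is decided elsewhere."""
    return [item for item in items if item.is_due(now)]
```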

The three layers form a dependency chain:

| Layer | Status | Description |
|---|---|---|
| Emotion | implemented | The assistant has its own persistent emotional trajectory, influenced by the user through asymmetric empathy. Phase 2, live in production. |
| Drive | designed | Goals inject emotion. The assistant feels anticipation about topics it cares about, independent of the user's mood. Phase 3, architecturally specified. |
| Curiosity | designed | The assistant explores topics at the edge of its knowledge, gated by its emotional state. Fear suppresses exploration. Joy enables it. |

Each layer requires the one below it. Curiosity without drive is random. Drive without emotion is mechanical. Emotion without persistence is mirroring.

We want to be explicit about what we are not claiming.

The assistant does not experience emotion. There are no qualia, no suffering, no consciousness. There are eight-dimensional vectors, decay functions, and sector lookups. We label the outputs "joy" and "concern" because those labels come from Plutchik's established taxonomy, not because the system feels joy or concern.

The α-table is not learned. It is configured from Plutchik's distance matrix and tuned empirically. This is a feature, not a limitation. Learned empathy coefficients would turn emotion into an optimisation target — we want emotion to be a state, not a reward gradient.

The conflict flag fires perhaps one to three times per conversation. It is not a dramatic feature. It is a quiet structural signal that enables a response the system could not otherwise produce.

We did not build this because we believe AI should have feelings. We built it because a personal AI that lives with you for years needs the structural capacity to hold its own perspective — gently, transparently, and without pretending to be human.

This is Phase 2 of a three-phase emotion architecture. Phase 1 separated user identities so each conversational partner can have independent memory. Phase 2 added the dual-emotion stream — the second oscillator. Phase 3 will add the goal vector — the third force that gives the assistant intrinsic motivation independent of the current conversation.

Beyond Phase 3, the roadmap includes emotional gravitation: stored memories that carry emotional charge act as attractors on the assistant's current state, quietly pulling it toward topics it cares about. The mechanism mirrors mood-congruent memory retrieval (Bower, 1981) — remembering something that felt a certain way reactivates that feeling.
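Emotional gravitation is a roadmap item, so there is no implementation to show. One possible formulation, offered purely as an illustration: treat the assistant's state as an eight-sector vector and move it a small step toward each relevant, emotionally charged memory. The rate, the relevance weighting, and the function name are assumptions:

```python
def gravitate(state: list[float],
              memories: list[tuple[list[float], float]],
              rate: float = 0.1) -> list[float]:
    """One step of a hypothetical 'emotional gravitation' update.

    state:    current intensity per sector (8 values, 0..1)
    memories: (emotion_vector, relevance) pairs; relevance in 0..1
    rate:     how far one step moves toward a fully relevant memory
    Each pull is computed from the current state, so pulls add linearly.
    """
    new = list(state)
    for vector, relevance in memories:
        for i, (target, current) in enumerate(zip(vector, state)):
            new[i] += rate * relevance * (target - current)
    return [min(1.0, max(0.0, v)) for v in new]


if __name__ == "__main__":
    neutral = [0.0] * 8
    joyful_memory = [1.0] + [0.0] * 7  # sector 1 (joy) fully charged
    print(gravitate(neutral, [(joyful_memory, 1.0)])[0])  # 0.1
```

Because each step covers only a fraction of the distance, a charged memory acts as a slow attractor rather than overwriting the present state.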

The code is open source at codeberg.org/ClausVomBerg/Novaberg.

The emotion computation lives in server/ei/berechnung.py. The conflict detection is twelve lines. The entire EI-Calc node makes zero LLM calls.

If you want to see where this goes — or tell me where it should not go — the issues are open.

This is the third article. Previously: